Explained: What will Google Tensor do on the Pixel 6?

Buying a Google Pixel smartphone is a no-brainer for many Android users. The cutting-edge cameras, timely software and security updates, and a clean Android experience, which is becoming a rarity despite the number of OEMs in the Android market, make Pixel smartphones hard to ignore. But for a smartphone line that is designed and built entirely by Google, and not by one of its OEM partners as was the case with Nexus phones, Google was still overly dependent on third-party chipmakers such as Qualcomm for the smartphone’s most important piece of hardware: the SoC.

That has finally changed with the latest lineup of Google smartphones, the Pixel 6 and Pixel 6 Pro, which run on Google’s first custom SoC, called Tensor. According to Google, the new SoC has been designed keeping in mind the AI and ML work it has been doing with the Google Research team.

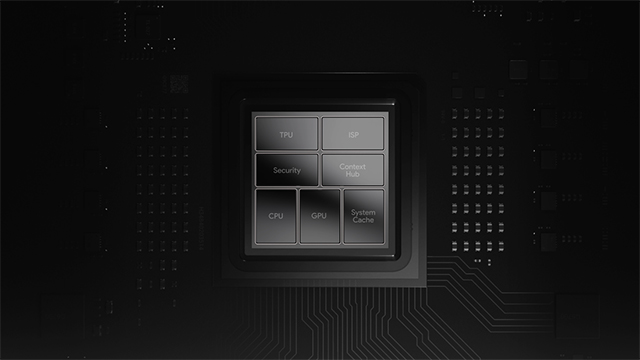

At the heart of Tensor SoC is Google’s Tensor Processing Unit (TPU), a matrix processor that specializes in neural network workloads. It already powers several Google products including Assistant, Translate, Photos, and Gmail. According to Google, “TPUs can’t run word processors, control rocket engines, or execute bank transactions, but they can handle the massive multiplications and additions for neural networks, at blazingly fast speeds.”
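To make the "massive multiplications and additions" concrete, here is a minimal Python sketch of a single fully connected neural network layer. It is only an illustration of the arithmetic involved, not Google's implementation; the function names (`dense_layer`, `relu`) and the sample numbers are made up for this example.

```python
def dense_layer(inputs, weights, biases):
    """Compute outputs[j] = biases[j] + sum over i of inputs[i] * weights[i][j]."""
    outputs = []
    for j in range(len(biases)):
        acc = biases[j]
        for i in range(len(inputs)):
            # One multiply-accumulate step; a matrix processor performs
            # many of these in parallel instead of one at a time.
            acc += inputs[i] * weights[i][j]
        outputs.append(acc)
    return outputs


def relu(values):
    """Common activation function: negative values become zero."""
    return [max(0.0, v) for v in values]


# Hypothetical sample data: 3 inputs feeding 2 output neurons.
inputs = [1.0, 2.0, 3.0]
weights = [[0.5, -1.0], [0.25, 0.0], [-0.5, 1.0]]
biases = [0.0, 0.5]

layer_out = relu(dense_layer(inputs, weights, biases))
print(layer_out)
```

Even this tiny layer needs six multiplications and six additions. Real speech and image models repeat the same pattern across millions of weights, which is the kind of workload a matrix processor is built for.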

Why a custom SoC matters

There is no denying that relying on third-party chips meant one less headache for Google, but not having an in-house SoC has its limitations. Just ask Apple. The flexibility of designing custom chipsets around its own software is what led Apple to shift from Intel processors to its in-house M1 chipset for its MacBook devices. The M1 is now a series with two additions: the M1 Pro and M1 Max.

Designing a custom SoC was all the more important for Google as it has been trying to perfect the AI experience for smartphone users. In a nutshell, Tensor provides the new Pixel smartphones with advanced hardware for better image processing and voice recognition.

What is Tensor’s role in AI processing?

Though Tensor is built like any other premium mobile SoC, Google says it brings the most advanced machine learning (ML) models ever seen on Pixel devices. This will improve the accuracy of the Automatic Speech Recognition (ASR) used in Google Assistant to respond to quick queries, claims Google. It will also allow Pixel phones to use ASR for applications with longer audio input, such as Recorder or Live Caption. Similarly, Tensor will enable Live Translate in videos using on-device speech and translation models.

What’s unclear is why Google needed a whole new chip to do this. The company claims that current chips cannot deliver this kind of processing power, but its demos were far from revolutionary.

What’s Tensor’s role in camera and security?

With Tensor, Google is hoping to take computational photography to new heights. According to the company, Tensor can handle photography tasks faster because the chip’s subsystems are designed to work better together.

It powers a new camera feature called Motion mode, which allows users to add or remove blur from photos. Google has also integrated its HDRNet image enhancement algorithm directly into the chip, so it works with all video modes and delivers 4K video at 60fps with accurate colors. It will also improve face detection.

To make Pixel phones more secure and protect sensitive user data, Tensor has a security core, which is a CPU-based subsystem that works with Google’s dedicated security chip, Titan M2. Google further claims that Titan M2 can thwart attacks, such as electromagnetic analysis, voltage glitching, and laser fault injection.